ARCHIVE ID

CT-CPN-2024-03

CATEGORY

CyberTools

STATUS

Active

CONDITION

Operational

COMPUTEPEN

Computational Output Manipulation Precision Unit Tactile Enabling Processing Engagement Navigation

Analysis

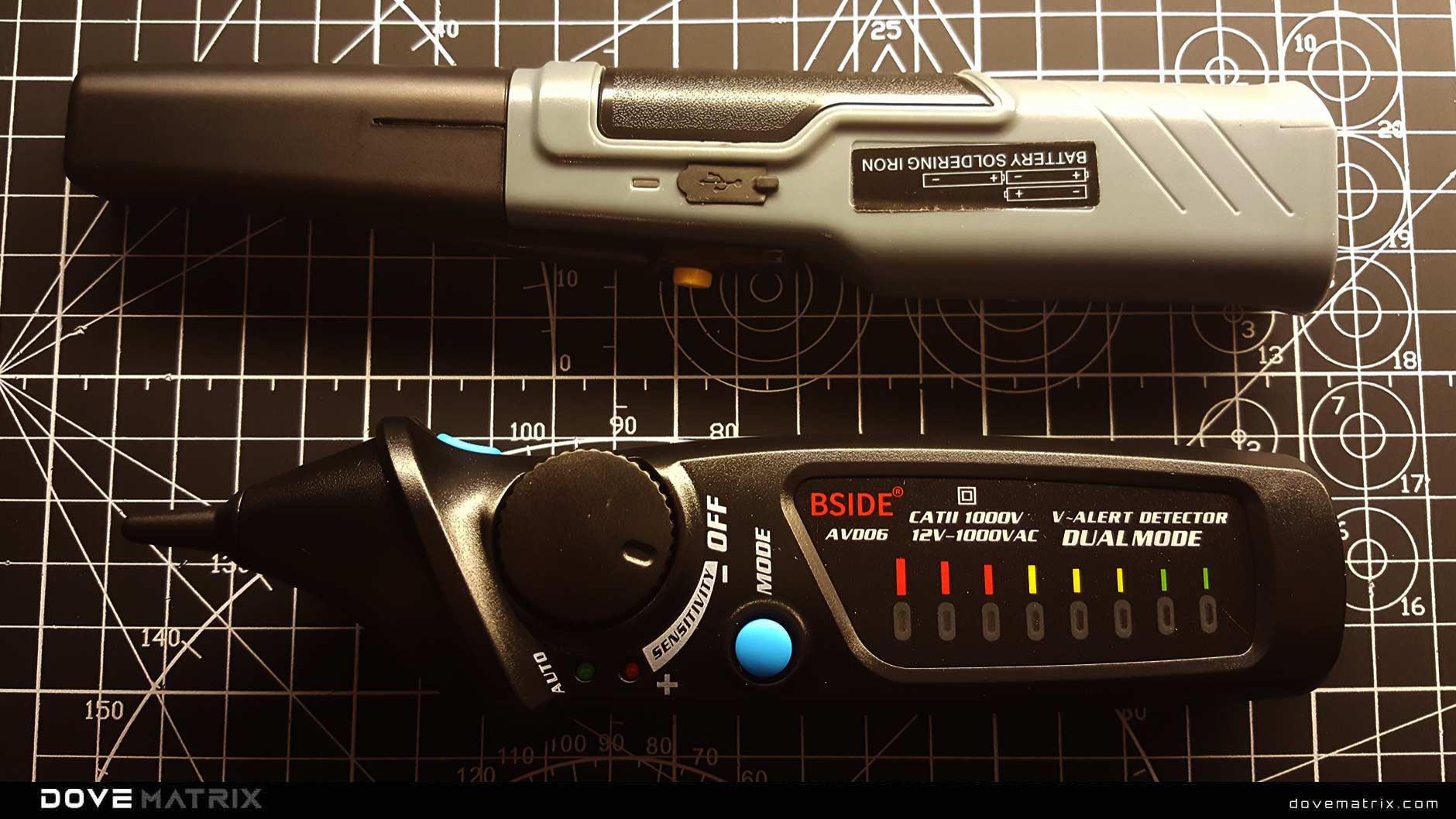

COMPUTEPEN Tool Analysis Structure

Advanced overlay visualization revealing gesture tracking patterns and sensor activation zones across the device. Multiple diagnostic layers show pressure response curves and tilt sensitivity ranges.

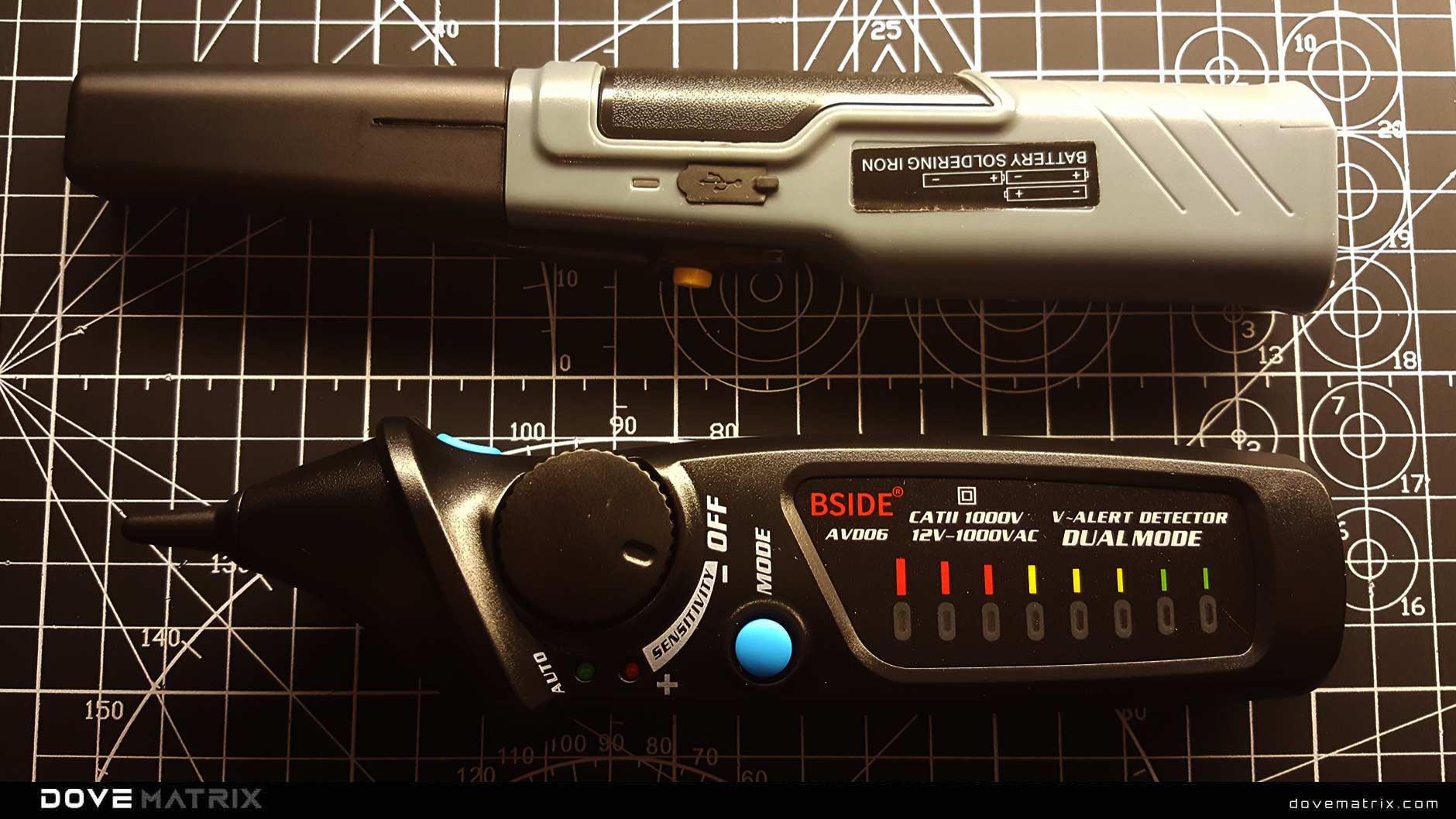

COMPUTEPEN Tool Analysis Energy

Standard diagnostic mode displaying the COMPUTEPEN in its primary operational state. Pressure sensors, tilt detection arrays, and integrated processing components are visible for baseline analysis.

COMPUTEPEN Tool Analysis Signal

Analysis of the internal sensor arrays and processing pathways, exposing the eight-axis motion tracking system and embedded microprocessor. The integrated sensor network and signal processing routes are shown.

Profile

Overview

COMPUTEPEN is a computational drawing instrument that translates physical gestures into digital commands and processes them on the device itself. Unlike standard stylus devices, COMPUTEPEN operates as both an input tool and an analytical processor, interpreting gestures in real time.

The device integrates eight-axis motion tracking into a natural drawing form factor, enabling precise capture of artistic and technical gestures. Its features include pressure sensitivity across 4,096 levels, tilt detection with a 60-degree range in every direction, rotation tracking for full orientation awareness, velocity sensing for stroke dynamics, an embedded microprocessor for real-time data transformation, and programmable response curves for adaptive input.
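The pressure and tilt figures above can be illustrated with a minimal sketch, assuming the sensor delivers a normalized force between 0.0 and 1.0. The 4,096 levels and the 60-degree tilt range come from the feature list; the power-law ("gamma") shape of the response curve and the function names are hypothetical, since the profile does not say how the programmable curves are defined.

```python
PRESSURE_LEVELS = 4096   # from the spec: 4,096 discrete pressure levels
MAX_TILT_DEG = 60.0      # from the spec: 60-degree tilt range in each direction


def apply_response_curve(force: float, gamma: float = 1.0) -> float:
    """Shape a normalized force (0.0-1.0) with a hypothetical power-law curve.

    gamma < 1 makes light strokes register more strongly; gamma > 1 softens them.
    """
    force = min(max(force, 0.0), 1.0)  # clamp out-of-range sensor readings
    return force ** gamma


def quantize_pressure(force: float, gamma: float = 1.0) -> int:
    """Convert a normalized force into an integer level from 0 to 4095."""
    shaped = apply_response_curve(force, gamma)
    return round(shaped * (PRESSURE_LEVELS - 1))


def clamp_tilt(angle_deg: float) -> float:
    """Limit a tilt reading to the supported +/-60 degree range."""
    return min(max(angle_deg, -MAX_TILT_DEG), MAX_TILT_DEG)


if __name__ == "__main__":
    for f in (0.0, 0.25, 0.5, 1.0):
        print(f, quantize_pressure(f), quantize_pressure(f, gamma=0.5))
```

With `gamma=0.5`, a quarter-strength press lands at mid-scale instead of a quarter of the range, which is one way a programmable curve could adapt input to a light-handed user.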

Architecture

The COMPUTEPEN architecture uses a multi-sensor gesture capture system that processes physical motion through parallel tracking channels. Its core functions are continuous sensor polling at 200 Hz (one sample every 5 ms), real-time gesture recognition, pressure-to-digital conversion, tilt vector calculation, and output data packetization.
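Two of the core functions listed above, tilt vector calculation and output packetization, can be sketched as follows. Polling at 200 Hz means one sample every 1000 / 200 = 5 ms. The tilt convention (angles measured from vertical in the X-Z and Y-Z planes, similar to the `tiltX`/`tiltY` convention in W3C Pointer Events) and the 8-byte packet layout are assumptions for illustration, not the device's documented format.

```python
import math
import struct
from typing import Tuple

POLL_HZ = 200                     # from the spec: sensors are polled at 200 Hz
POLL_PERIOD_MS = 1000 / POLL_HZ   # 5.0 ms between consecutive samples

# Hypothetical 8-byte packet layout (little-endian):
# uint32 timestamp in ms, uint16 pressure level, int8 tilt_x, int8 tilt_y (degrees)
PACKET_FORMAT = "<IHbb"


def tilt_vector(tilt_x_deg: float, tilt_y_deg: float) -> Tuple[float, float, float]:
    """Return the unit vector along the pen axis from two tilt angles.

    Assumes each angle is measured from vertical in the X-Z and Y-Z planes,
    so the pen axis is proportional to (tan(tilt_x), tan(tilt_y), 1).
    """
    x = math.tan(math.radians(tilt_x_deg))
    y = math.tan(math.radians(tilt_y_deg))
    norm = math.sqrt(x * x + y * y + 1.0)
    return (x / norm, y / norm, 1.0 / norm)


def packetize(sample_index: int, pressure: int,
              tilt_x_deg: float, tilt_y_deg: float) -> bytes:
    """Pack one polled sample into the hypothetical wire format.

    Tilt is rounded to whole degrees; the +/-60 degree range fits in an int8.
    """
    timestamp_ms = int(sample_index * POLL_PERIOD_MS)
    return struct.pack(PACKET_FORMAT, timestamp_ms, pressure,
                       round(tilt_x_deg), round(tilt_y_deg))


if __name__ == "__main__":
    print(tilt_vector(30.0, -15.0))
    print(packetize(3, 2048, 30.0, -15.0).hex())
```

A fixed little-endian layout keeps each packet small and trivial to decode on the host with the same format string.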

The device activates as soon as it detects surface contact. It tracks all motion parameters continuously and processes gesture data with its embedded algorithms before transmitting it to connected systems, keeping latency low so that drawing feels natural.
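Contact-triggered activation on top of continuous tracking can be modeled as a small state machine that inspects every polled sample but only emits stroke events while the tip is on the surface. This is a sketch under stated assumptions: the `CONTACT_THRESHOLD` value, the `Sample` fields, and the down/move/up event names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, Tuple

CONTACT_THRESHOLD = 20   # hypothetical pressure level that counts as surface contact


@dataclass
class Sample:
    pressure: int    # quantized pressure level, 0-4095
    tilt_x: float    # degrees
    tilt_y: float    # degrees


def contact_events(samples: Iterable[Sample]) -> Iterator[Tuple[str, Sample]]:
    """Turn a stream of polled samples into pen-down / move / pen-up events.

    Every sample is examined (continuous tracking), but events are emitted
    only once contact is detected (contact-triggered activation).
    """
    in_contact = False
    for s in samples:
        touching = s.pressure >= CONTACT_THRESHOLD
        if touching and not in_contact:
            yield ("down", s)     # first sample after contact is detected
        elif touching:
            yield ("move", s)     # tip still on the surface
        elif in_contact:
            yield ("up", s)       # tip has just lifted
        in_contact = touching


if __name__ == "__main__":
    stream = [Sample(p, 0.0, 0.0) for p in (0, 50, 80, 10, 0)]
    for kind, sample in contact_events(stream):
        print(kind, sample.pressure)
```

Because the loop is a generator, each event is available as soon as its sample arrives, rather than after a whole stroke is buffered.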

Behavior

Stylus calibration requires periodic verification of pressure response curves and tilt accuracy to maintain drawing precision. Primary calibration involves pressure zero-point adjustment, maximum pressure limit setting, tilt angle verification across all axes, and rotation sensor alignment validation.
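Zero-point adjustment and maximum pressure limit setting amount to a two-point linear calibration, and tilt verification can be treated as a per-axis comparison against known reference angles. The sketch below assumes raw readings are captured with the tip unloaded and at full press, and that reference angles come from a fixture; the averaging approach, the names, and the 1-degree default tolerance are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class PressureCalibration:
    zero_point: float   # raw sensor reading with the tip unloaded
    max_limit: float    # raw sensor reading at the configured maximum pressure

    def normalize(self, raw: float) -> float:
        """Map a raw reading to 0.0-1.0 using the two calibration points."""
        span = self.max_limit - self.zero_point
        if span <= 0:
            raise ValueError("max_limit must be greater than zero_point")
        value = (raw - self.zero_point) / span
        return min(max(value, 0.0), 1.0)   # clamp readings outside the span


def calibrate_pressure(idle: Sequence[float],
                       full: Sequence[float]) -> PressureCalibration:
    """Average idle readings for the zero point and full-press readings for the limit."""
    if not idle or not full:
        raise ValueError("need at least one reading for each calibration point")
    return PressureCalibration(sum(idle) / len(idle), sum(full) / len(full))


def tilt_within_tolerance(measured: Sequence[float], reference: Sequence[float],
                          tolerance_deg: float = 1.0) -> bool:
    """Check measured tilt angles against known reference angles, axis by axis."""
    if len(measured) != len(reference):
        raise ValueError("measured and reference must cover the same axes")
    return all(abs(m - r) <= tolerance_deg for m, r in zip(measured, reference))


if __name__ == "__main__":
    cal = calibrate_pressure([98, 100, 102], [3998, 4000, 4002])
    print(cal, cal.normalize(2050))
```

Averaging several readings per point reduces the effect of sensor noise on the stored zero point and limit.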

Per-user hand calibration adapts the response curves to each person's drawing style. A calibration cycle is recommended every 100 operational hours, or whenever the device switches between user profiles, to keep gesture interpretation accurate.
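The recommended schedule reduces to two triggers, elapsed operational hours or a profile switch, and per-user curve adaptation can be sketched as fitting one curve parameter to how hard a user typically presses. The 100-hour threshold comes from the text; the `CalibrationState` record and the gamma-fitting rule (map the user's typical normalized force to mid-scale) are hypothetical illustrations.

```python
import math
from dataclasses import dataclass

RECALIBRATION_HOURS = 100.0   # from the text: recalibrate every 100 operational hours


@dataclass
class CalibrationState:
    profile: str                    # user profile the last calibration was made for
    hours_since_calibration: float  # operational hours logged since then


def needs_calibration(state: CalibrationState, active_profile: str) -> bool:
    """Apply the two recommended triggers: elapsed hours or a profile switch."""
    return (state.hours_since_calibration >= RECALIBRATION_HOURS
            or active_profile != state.profile)


def fit_gamma(typical_force: float) -> float:
    """Hypothetical per-user curve fit for a power-law response curve.

    Chooses gamma so that typical_force ** gamma == 0.5, i.e. the user's
    usual pressing force lands at mid-scale.
    """
    if not 0.0 < typical_force < 1.0:
        raise ValueError("typical_force must be strictly between 0 and 1")
    return math.log(0.5) / math.log(typical_force)


if __name__ == "__main__":
    state = CalibrationState(profile="alice", hours_since_calibration=42.0)
    print(needs_calibration(state, "alice"), needs_calibration(state, "bob"))
    print(fit_gamma(0.25))
```

Keeping the check as a pure function makes it easy to run on every session start without side effects.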